The history of music technology is often told as a story of more power. Better software, better instruments, more effects, more precision. But from the perspective of everyday creators, the real question is usually different: can I turn what I mean into something I can hear? That is where an AI Music Generator becomes valuable. Not because it replaces trained musicians, and not because it magically removes all creative difficulty, but because it gives non-musicians a workable way into song creation. In my view, that is one of the most important things ToMusic represents. It treats music generation as an act of guided expression rather than as a privilege reserved for people already fluent in production tools.

That framing matters because many people who need music are not trying to become professional producers. They are marketers, teachers, founders, video creators, lyric writers, and hobbyists. They may have a strong sense of atmosphere, pacing, or message, but no practical way to translate those instincts into finished audio. Traditional software can feel like a wall to them. A platform built around prompts and lyrics turns that wall into a door. It does not promise mastery. It offers participation.

Why Musical Skill And Creative Need Are Different Things

A person can need custom music without possessing technical music skills. That sounds obvious, yet many creative systems still assume those two things naturally go together.

The Ability To Imagine Music Is Widespread

Most people can tell when music feels wrong for a scene. They can hear when something is too dramatic, too flat, too childish, or too polished. That means many people already have musical judgment at the level of audience response, even if they cannot write chords or arrange instruments.

Production Fluency Is Still A Separate Barrier

Knowing what you want is not the same as knowing how to build it. Traditional production tools often demand early decisions that non-musicians cannot make confidently. That is where language-driven generation becomes useful. It lets users express the result they want before they understand every technical step behind it.

Why ToMusic Feels Designed For Creative Outsiders

What stands out in ToMusic’s official presentation is that the workflow does not begin by asking the user to behave like an engineer. It begins by asking what kind of music they want and whether they want to start from a prompt or from lyrics.

Text Input Makes Intent The First Skill

A creator can start with a description of mood, genre, tempo, and instrumentation. This is important because it rewards clarity of thought rather than technical setup ability. If you can describe what you need, you already have enough to begin.

Lyrics Input Helps Writers Stay In Their Own Strength

Some users are much stronger with words than with sound design. By allowing custom lyrics as input, the platform lets those users stay close to their natural creative advantage while still moving toward a complete song.

The Official Workflow Is Friendly To Beginners

The platform’s process remains short, which is one reason it feels accessible to people outside traditional music production.

Step 1. Describe The Music Or Enter Lyrics

The first step is to provide either a text prompt or custom lyrics. For a beginner, this is a major advantage. There is no need to build an arrangement manually before anything exists.

Step 2. Select The Model And Guide The Output

The next step is to choose among the four models, V1 through V4, while shaping the result through settings such as genre, mood, tempo, instrumentation, length, style tags, and voice characteristics. This gives direction without forcing the user into specialist tools.

Step 3. Generate The Track

Once the request is prepared, the system generates the music. At this point, the user gets what many traditional workflows delay: a fast, testable first version.

Step 4. Save The Stronger Versions

The ability to save outputs in the library matters because beginners often learn by comparison. One version may feel too crowded, another too slow, and another unexpectedly close to the goal. Keeping those versions visible helps the user build taste through iteration.
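For readers who like to see a workflow written down, the four steps above can be sketched as a small planning structure. This is only an illustration under stated assumptions: `TrackRequest`, `to_prompt`, and `tempo_variations` are hypothetical names invented here, and nothing below calls or describes an actual ToMusic API. It simply shows how the settings named in Step 2 might be organized into a text description, and how a few variations could be prepared for side-by-side comparison.

```python
from dataclasses import dataclass, field, replace
from typing import List

# The four model names mentioned in the article.
MODELS = ("V1", "V2", "V3", "V4")


@dataclass(frozen=True)
class TrackRequest:
    """Hypothetical container for Step 2 settings (not a ToMusic API)."""
    model: str
    genre: str
    mood: str
    tempo_bpm: int
    instrumentation: List[str] = field(default_factory=list)

    def __post_init__(self):
        # Reject model names outside the four the article lists.
        if self.model not in MODELS:
            raise ValueError(f"unknown model: {self.model}")

    def to_prompt(self) -> str:
        """Turn the settings into a plain-language description."""
        parts = [f"{self.mood} {self.genre}", f"around {self.tempo_bpm} BPM"]
        if self.instrumentation:
            parts.append("featuring " + ", ".join(self.instrumentation))
        return ", ".join(parts)


def tempo_variations(base: TrackRequest, tempos: List[int]) -> List[TrackRequest]:
    """Copy the base request once per tempo so drafts can be compared."""
    return [replace(base, tempo_bpm=t) for t in tempos]


base = TrackRequest(
    model="V1",
    genre="acoustic folk",
    mood="warm",
    tempo_bpm=90,
    instrumentation=["guitar", "soft piano"],
)
for draft in tempo_variations(base, [80, 90, 100]):
    print(draft.model, "|", draft.to_prompt())
```

Changing one setting at a time, as the tempo example does, makes it easier to hear which choice caused which difference when the saved versions are compared.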

How The Four Models Help Different Kinds Of Beginners

A common problem with beginner tools is that they oversimplify everything into one path. ToMusic avoids that by giving users several model choices with different strengths.

V1 Works Well For Simpler Experiments

A new user often wants fast feedback rather than maximum complexity. A balanced model with more straightforward behavior can be the right place to learn what kinds of prompts create what kinds of results.

V2 Helps When The Music Needs More Space

Some projects need longer tracks, slower development, or a more sustained mood. A beginner may not know how to build that manually, so a model that supports extended composition becomes especially useful.

V3 Adds Musical Density For Fuller Ideas

Users creating richer content may want more internal movement in the arrangement. A model centered on harmonies and rhythmic ideas can make the output feel more developed without requiring the user to program those layers.

V4 Supports More Ambitious Vocal Work

When the song matters as a song, not just as a backing track, stronger vocal expression becomes important. That is where a more advanced model is likely to feel worthwhile.

What Non-Musicians Can Actually Do With This Kind Of Tool

The interesting question is not whether non-musicians can click generate. The question is whether they can reach meaningful creative outcomes.

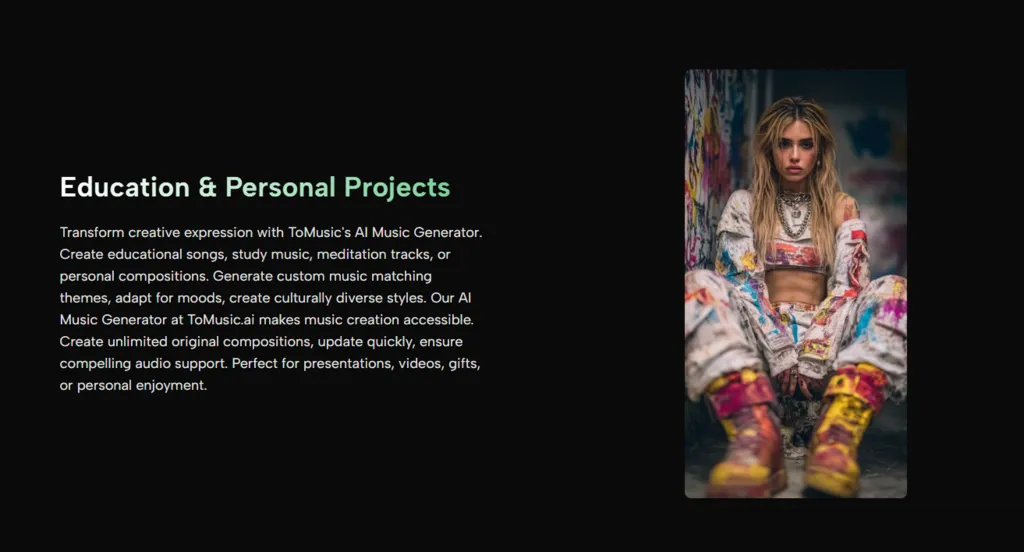

Build Mood-Centered Audio For Content

A social creator or teacher can generate music suited to a lesson, presentation, or video without needing stock music that feels too generic or off-tone.

Test Song Ideas Without A Full Team

A founder, writer, or hobbyist can sketch ideas quickly. Instead of holding a song concept in abstract form, they can hear it and decide whether it deserves more effort.

Explore Different Styles Without Starting From Zero

Because the platform supports multiple models and settings, users can compare emotional directions more efficiently. This helps non-musicians learn through experimentation rather than through theory alone.

| User Type | Common Problem | What ToMusic Makes Easier |
|---|---|---|
| Video creator | Needs custom mood quickly | Prompt-based soundtrack generation |
| Lyric writer | Has words but no melody | Song creation from custom lyrics |
| Teacher or presenter | Needs fitting music without complex tools | Accessible text-driven workflow |
| Small business owner | Wants brand-aligned audio ideas | Style and mood control |
| Beginner creator | Unsure how to start making music | Fast first drafts and saved versions |
| Hobbyist songwriter | Wants to hear multiple song directions | Four-model comparison |

Why Lyrics Matter Especially For Non-Musicians

One of the strongest bridges for non-musicians is language itself. If someone writes well, they already possess a creative tool that can lead to music.

Words Can Carry More Than Meaning

Lyrics do more than deliver a message. They imply pacing, tension, repetition, and emotional focus. When those words are turned into a song draft, the writer gets feedback that is far more tangible than silent text on a page.

Hearing The Words Changes Revision Decisions

A line that feels strong when read may sound clumsy when sung. A chorus that looks balanced may feel too long. That is why Lyrics to Music AI is useful not just for creation but for evaluation. It lets writers hear the difference between written confidence and singable clarity.

The Limits Are Part Of The Honest Value

A credible tool is one that is useful without pretending to solve everything.

Better Inputs Usually Lead To Better Outputs

A broad prompt can create a broad result. A weak lyric can produce a weak song draft. This is not a flaw unique to ToMusic. It is simply part of working with any generative system.

The First Result Is Often A Direction, Not A Destination

Non-musicians in particular may need to understand this. The best use of the platform is usually iterative: generate, listen, revise, and compare. That loop is where confidence grows.

Taste Still Matters More Than Automation

Even if the tool handles the heavy technical lift, the creator still needs to decide what feels right. Automation can provide options. It cannot replace aesthetic judgment.

What This Means For Creative Access

The most compelling thing about a platform like ToMusic is not that it turns everyone into a producer. It is that it gives more people a legitimate place in the making of music.

Creation Starts With Communication

If users can explain what they want, they can begin. That is a major expansion of access because communication is a far more common skill than production fluency.

Beginners Can Learn By Hearing, Not Only By Studying

Instead of absorbing music theory first and hoping to apply it later, users can generate results, compare them, and gradually understand what kinds of choices shape the outcome.

Non-Musicians Gain A Real First Draft Tool

That may be the biggest change of all. For years, many people with valid musical ideas simply had no practical way to hear them. ToMusic gives those people something they have often been missing: a path from intent to sound that does not begin with technical intimidation. In that sense, the platform is not only about music generation. It is about opening the creative process to people who were previously pushed to the edges of it.
