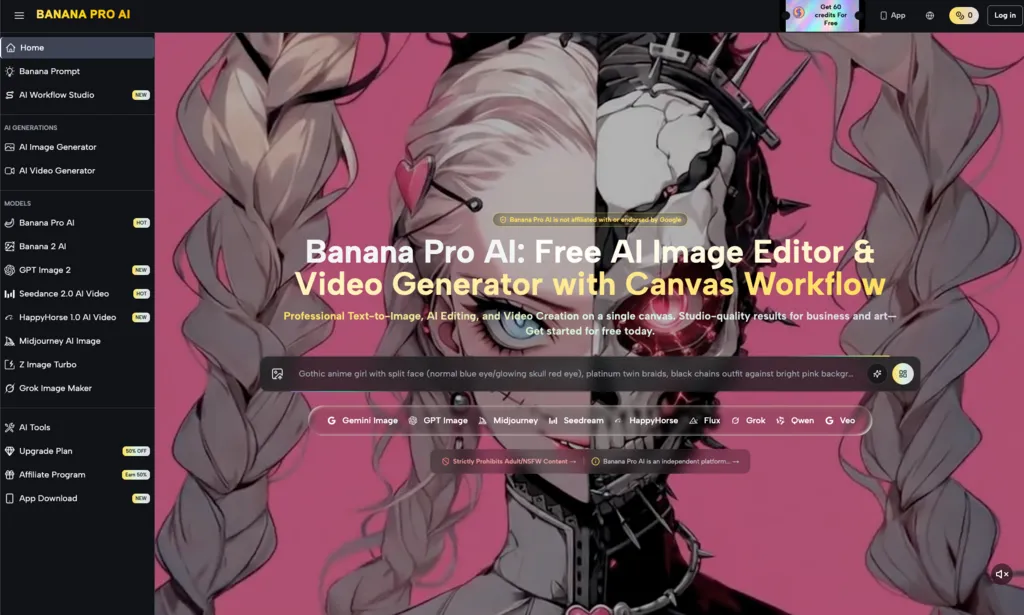

In the early stages of generative media adoption, the focus was almost entirely on “the prompt.” Creators spent hours engineering complex strings of text, hoping a single model would return a perfect, production-ready asset in one go. However, as AI integration matures within professional marketing and creative teams, the “one-shot” philosophy is being replaced by something more pragmatic: model routing.

Professional operators are realizing that using a high-parameter, high-compute model for every task is not just expensive; it is inefficient. Production workflows now increasingly mirror traditional software architecture, where different “tiers” of models are assigned to specific stages of the creative lifecycle. This article explores how teams decide which model handles which stage of the pipeline, focusing on the tactical distribution of tasks across the Banana Pro AI ecosystem.

The Logic of Tiered Model Routing

Model routing is the practice of directing a specific task to the model most capable of handling it at the required speed and cost. In a professional setting, “quality” is not a static metric; it is relative to the stage of the project. A thumbnail for a mood board does not require the same pixel density or anatomical precision as a hero image for a landing page.

When an operator looks at a project, they aren’t just looking for an image; they are looking for a throughput strategy. The decision-making process usually involves three variables: latency, fidelity, and cost (either in credits or compute time). By segmenting these needs, teams can avoid the “overkill” of using a flagship model for basic ideation.
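The three-variable decision can be sketched as a small routing function. This is a minimal illustration, not a platform API: the tier identifiers, the fidelity scale, and every latency and credit figure are hypothetical placeholders chosen to show the shape of the logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str            # hypothetical identifier, not an official model ID
    latency_s: float     # typical seconds per render (placeholder value)
    fidelity: int        # 1 = rough draft, 5 = hero quality (assumed scale)
    cost_credits: float  # credits per render (placeholder value)


# Placeholder figures for illustration only, not published specifications.
TIERS = [
    ModelTier("nano-banana", latency_s=3.0, fidelity=2, cost_credits=1.0),
    ModelTier("banana-pro", latency_s=60.0, fidelity=5, cost_credits=10.0),
]


def route(min_fidelity: int, max_latency_s: float, max_cost: float) -> ModelTier:
    """Return the cheapest tier that satisfies all three constraints."""
    candidates = [
        tier for tier in TIERS
        if tier.fidelity >= min_fidelity
        and tier.latency_s <= max_latency_s
        and tier.cost_credits <= max_cost
    ]
    if not candidates:
        raise ValueError("no tier satisfies the given constraints")
    return min(candidates, key=lambda tier: tier.cost_credits)


# A mood-board thumbnail tolerates low fidelity but needs a fast turnaround.
thumbnail_tier = route(min_fidelity=1, max_latency_s=10, max_cost=5)
# A landing-page hero image needs high fidelity and can wait for it.
hero_tier = route(min_fidelity=4, max_latency_s=120, max_cost=20)
```

Choosing the cheapest qualifying tier is one possible policy; a team that values speed over credits could sort by latency instead.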

One of the biggest hurdles in this approach is the lack of a standardized language between models. A prompt that works perfectly in a lightweight model may produce entirely different composition or color grading in a more robust one. This “prompt drift” is a significant limitation that operators must account for by building flexible, rather than rigid, prompt templates.
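One way to keep templates flexible is to separate the shared creative intent from per-model adjustments. The sketch below assumes a simple slot-based template; the model keys and the suffix text are hypothetical and would in practice come from a team's own testing of each model.

```python
from string import Template

# Shared creative intent, expressed once with named slots.
BASE_PROMPT = Template("$subject, $composition, $style")

# Hypothetical per-model adjustments that compensate for drift in
# lighting or color grading when the same prompt moves between tiers.
MODEL_SUFFIX = {
    "nano-banana": "",
    "banana-pro": "soft studio lighting, neutral color grade",
}


def build_prompt(model: str, **slots: str) -> str:
    """Fill the shared template, then append any model-specific suffix."""
    prompt = BASE_PROMPT.substitute(**slots)
    suffix = MODEL_SUFFIX.get(model, "")
    return f"{prompt}, {suffix}" if suffix else prompt


draft_prompt = build_prompt(
    "nano-banana", subject="ceramic mug", composition="centered", style="minimalist"
)
hero_prompt = build_prompt(
    "banana-pro", subject="ceramic mug", composition="centered", style="minimalist"
)
```

Because the slots stay identical across tiers, only the suffix table has to change when a model's behavior shifts.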

Stage 1: Ideation and the Utility of Nano Banana

The beginning of any creative project is characterized by volume. You need fifty variations of a concept to find the one that resonates. This is where high-fidelity models actually become a hindrance. Waiting sixty seconds for a high-resolution render just to realize the composition is wrong is a waste of resources.

The Nano Banana model serves as the “drafting pencil” of the AI workflow. Its primary value lies in its speed and its ability to interpret broad spatial relationships without getting bogged down in texture or lighting details. Operators use this tier for:

- Compositional Sketching: Determining where the subject sits in the frame and how the negative space is utilized.

- Color Palette Testing: Rapidly cycling through different seasonal or brand-aligned color schemes.

- Rapid Iteration: When a client is in the room (or on a call) and you need to pivot from a “brutalist” aesthetic to a “minimalist” one in seconds.
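The volume-first approach behind these uses can be sketched as a prompt grid: every aesthetic is crossed with every palette to produce a batch of draft prompts. The prompt wording and the example values are assumptions for illustration, and the actual submission to a model is left out.

```python
from itertools import product


def draft_prompts(subject: str, aesthetics: list[str], palettes: list[str]) -> list[str]:
    """Cross every aesthetic with every palette to get a batch of draft prompts."""
    return [
        f"{subject}, {aesthetic} aesthetic, {palette} palette"
        for aesthetic, palette in product(aesthetics, palettes)
    ]


batch = draft_prompts(
    "desk lamp",
    aesthetics=["brutalist", "minimalist"],
    palettes=["autumn", "brand blue"],
)
```

Two aesthetics and two palettes yield four drafts; adding a third axis, such as framing, grows the batch multiplicatively, which is why this step belongs on the fastest tier.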

At this stage, the goal is not a finished product. It is a blueprint. The Nano Banana Pro framework is designed to handle this high-velocity output, allowing creators to burn through ideas without the cognitive load of managing heavy compute costs.

Stage 2: High-Fidelity Refinement and the AI Image Editor

Once a direction is established in the ideation phase, the workflow moves into the refinement stage. This is where the requirements shift from quantity to quality. Here, the operator needs a model that understands complex textures, realistic lighting, and specific brand guidelines.

This is the domain of the Banana Pro model. In a production pipeline, this model acts as the primary engine for “hero” assets. Unlike the drafting phase, the focus here is on nuance. An operator might take a low-resolution concept from the previous stage and use it as an image-to-image reference.

The transition from a draft to a high-fidelity image is rarely seamless. Often, the AI Image Editor tools within the platform are required to fix specific “hallucinations” that occur during the upscaling or refinement process. For example, a model might perfectly capture the lighting of a product but struggle with the typography on a label. Professional creators don’t expect the model to be perfect; they expect the model to be “editable.”

It is important to maintain a level of skepticism regarding “zero-shot” perfection at this stage. Even the most advanced models occasionally struggle with anatomical consistency or the physics of complex reflections. The operator’s role is to identify these failures early and use targeted in-painting or localized adjustments rather than re-rolling the entire prompt.

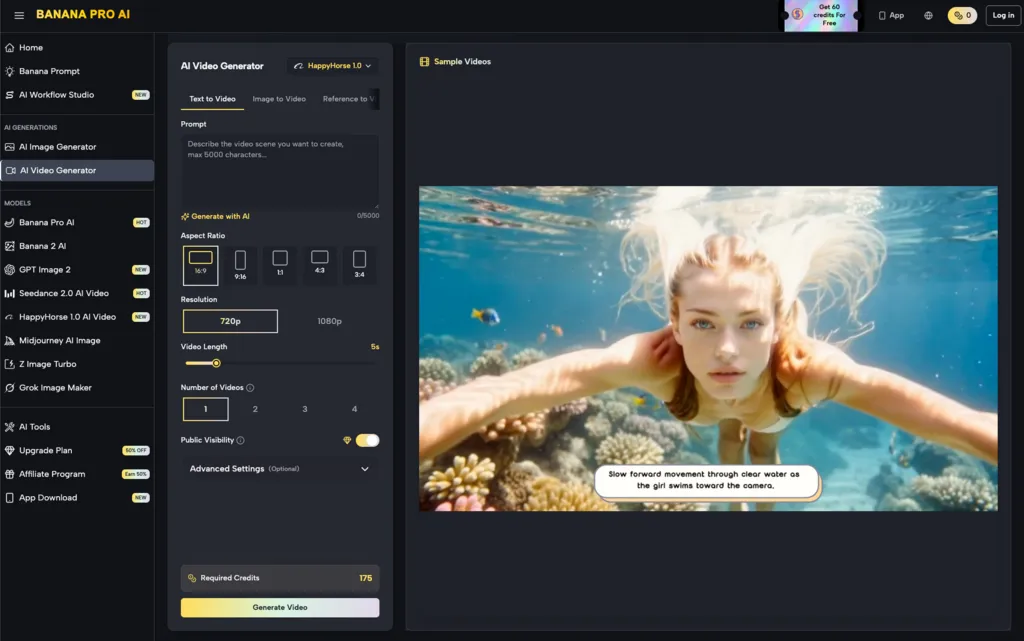

Stage 3: Transitioning to Motion and Video Production

The final and most complex stage of the routing process involves adding the dimension of time. Moving from a static image to a video asset introduces a host of new technical challenges, most notably temporal consistency. If a character’s hair changes length between frame 1 and frame 60, the illusion is broken.

When the workflow dictates a move into motion, the task is routed to Banana AI specifically for its video generation capabilities. This stage is often the most resource-intensive. Operators typically do not start with video; they use the high-quality static assets generated in the previous step as “first-frame” references.

This “image-to-video” routing is a critical efficiency gain. By establishing the visual “truth” (the lighting, the subject, the environment) in a static image first, the video model has a concrete anchor to work from. This reduces the randomness that often plagues text-to-video workflows.
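The anchoring idea can be illustrated by tracking lineage: each stage records the asset it used as a reference, so the video clip always traces back to an approved still. The `generate` function below is a stand-in that only records metadata, and the model identifiers are hypothetical; no real generation API is implied.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Asset:
    stage: str                            # "draft", "hero", or "video"
    model: str                            # hypothetical model identifier
    prompt: str
    reference: Optional["Asset"] = None   # the asset this one was anchored to


def generate(stage: str, model: str, prompt: str,
             reference: Optional[Asset] = None) -> Asset:
    """Stand-in for a real generation call; it only records lineage."""
    return Asset(stage=stage, model=model, prompt=prompt, reference=reference)


def image_to_video(prompt: str) -> Asset:
    """Route a prompt through draft, hero, and video stages in order."""
    draft = generate("draft", "nano-banana", prompt)
    hero = generate("hero", "banana-pro", prompt, reference=draft)
    # The approved hero image serves as the first-frame reference.
    return generate("video", "banana-ai-video", prompt, reference=hero)


clip = image_to_video("ceramic mug on a walnut desk, morning light")
```

Keeping the reference chain explicit also makes it easy to see which still a rejected clip came from before deciding where to re-enter the pipeline.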

However, a significant limitation remains: the lack of granular control over physics. While we can control the camera movement or the general action, predicting exactly how a fabric will drape or how water will splash remains a matter of trial and error. Professional operators mitigate this by generating multiple short clips and “stitching” the best physical simulations together in post-production.

Managing Resource Allocation and Technical Debt

Choosing between Nano Banana and the more advanced versions is also a business decision. For an agency, every “re-roll” on a high-tier model carries a marginal cost. Over a month of production, these costs compound.
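A rough worked example shows how the costs compound. All figures here are hypothetical (200 assets per month, four discovery re-rolls per asset, 10 credits per high-tier render, 1 credit per draft render); substitute real pricing before drawing conclusions.

```python
def high_tier_only(assets: int, rerolls: int, high_cost: int) -> int:
    """Every attempt, including discovery re-rolls, runs on the high tier."""
    return assets * (rerolls + 1) * high_cost


def routed(assets: int, rerolls: int, high_cost: int, low_cost: int) -> int:
    """Discovery re-rolls run on the low tier; one final render runs high."""
    return assets * (rerolls * low_cost + high_cost)


# Hypothetical monthly workload and pricing.
unrouted_credits = high_tier_only(assets=200, rerolls=4, high_cost=10)
routed_credits = routed(assets=200, rerolls=4, high_cost=10, low_cost=1)
savings = unrouted_credits - routed_credits
```

Under these assumed numbers the unrouted workflow spends 10,000 credits and the routed one 2,800, a difference of 7,200 credits per month.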

Effective model routing involves setting “thresholds” for when to move between tiers. A common internal rule of thumb is the “Three-Roll Rule”: if you cannot get the composition right after three iterations in a high-fidelity model, drop back down to a lower-tier, faster model like Nano Banana Pro to diagnose the prompt’s structural issues.
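The Three-Roll Rule translates directly into a bounded retry loop. In this sketch the `render` callable is an assumption: it stands in for one generation attempt plus the operator's verdict, returning `True` when the composition is accepted. The tier names are hypothetical identifiers.

```python
from typing import Callable

MAX_HIGH_TIER_ROLLS = 3


def next_tier(render: Callable[[str, str], bool], prompt: str,
              high: str = "banana-pro", low: str = "nano-banana-pro") -> str:
    """Try the high tier up to three times, then fall back to the low tier.

    Returns the name of the tier the operator should keep working in.
    """
    for _ in range(MAX_HIGH_TIER_ROLLS):
        if render(high, prompt):
            return high  # composition accepted, stay on the high tier
    return low  # three misses: diagnose the prompt on the faster tier


attempts = []


def always_rejected(model: str, prompt: str) -> bool:
    """Simulated operator who rejects every render."""
    attempts.append(model)
    return False


result = next_tier(always_rejected, "product shot, overhead angle")
```

Encoding the limit as a constant keeps the rule visible and easy to adjust if a team finds that two or four rolls suits its costs better.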

This prevents “technical debt” in the creative process, where hours are spent trying to fix a fundamentally flawed prompt in a model that takes too long to render. By shifting the heavy lifting of “discovery” to the faster, lighter models, the overall production timeline is compressed.

The Human in the Loop: The “Model Operator” Role

As these tools become more segmented, the role of the creator is shifting toward that of a “Model Operator.” This role relies less on “artistic intuition” in the traditional sense and more on “workflow orchestration.”

The operator must know the “breaking points” of each model. They need to know that one model might be excellent at landscapes but poor at human hands, while another might excel at stylized 3D renders but struggle with photorealism. This specialized knowledge allows them to route tasks away from a model’s weaknesses and toward its strengths.

There is currently no “autopilot” for this. While some systems attempt to auto-route prompts based on keyword analysis, the results are often underwhelming. Human judgment is still required to look at a mid-stage render and decide if it’s worth pushing into the video generation phase or if it needs another pass through the refinement engine.

Final Thoughts on Pipeline Integration

The future of production AI isn’t a single “god-model” that does everything. It is a modular system where the right tool is applied at the right time. By understanding the specific roles of the Nano Banana, the flagship high-fidelity tools, and the video generation engines, creators can build a pipeline that is both sustainable and high-performing.

Success in this environment requires a departure from the “magic button” mindset. It demands an editorial eye, a practical approach to resource management, and a willingness to navigate the inherent uncertainties of generative technology. The goal is not just to create, but to create with a level of control and predictability that professional standards demand.
